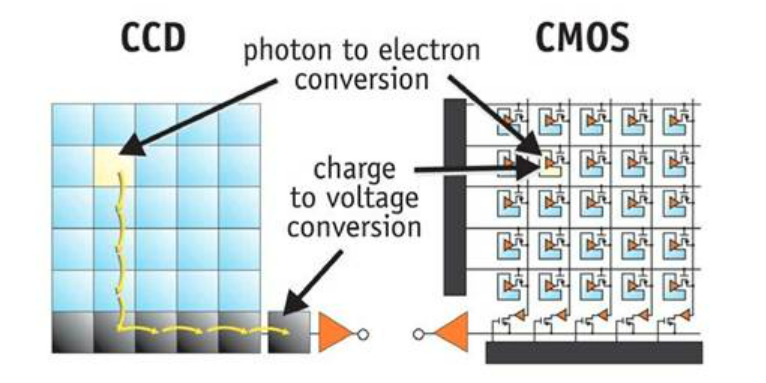

The question of whether to choose a camera with a CCD or a CMOS sensor is practically moot at this point. CCDs (charge-coupled devices) were the norm until a little more than a decade ago. They offered better sensitivity and generated less noise in the signal (think of it as static) than their CMOS counterparts, a result of the sensor architecture and how the data was transferred. For even greater sensitivity, CCDs could be intensified (called ICCDs) to further amplify the signal. ICCDs are still in use today and are suitable for very low light applications such as fluorescence and luminescence. One last note about CCDs: Sony discontinued production of CCDs in 2017, focusing its development efforts on CMOS technologies. Other companies still manufacture CCDs, but attention has definitely shifted toward CMOS.

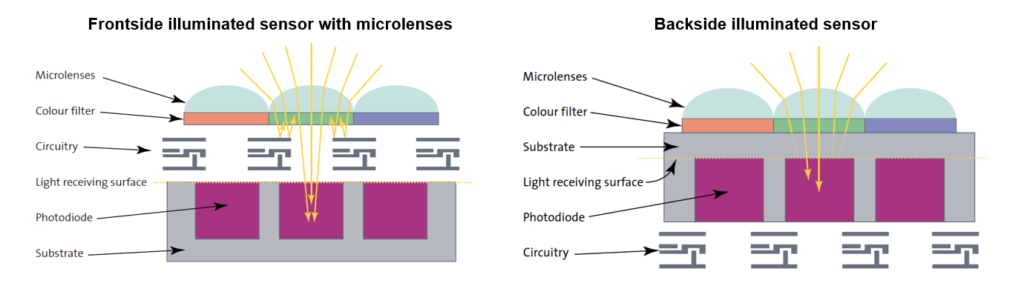

CMOS sensors are generally less expensive to manufacture and quickly became popular in consumer cameras, cell phones, and security cameras. Because of their physical architecture, however, CMOS sensors originally lacked the sensitivity of CCDs. More recently, manufacturers found that flipping the architecture upside-down and putting the circuitry below the photodiode dramatically improved sensitivity and reduced electronic noise. This CMOS sensor architecture is referred to as back-illuminated CMOS (Figure 9).
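To get a feel for why higher sensitivity and lower electronic noise matter together, here is a rough sketch using the standard per-pixel signal-to-noise estimate: signal in electrons divided by the square root of the summed shot, dark, and read noise variances. The function name `snr` and all of the numbers (photon count, quantum efficiency, read noise) are illustrative assumptions chosen for this example, not measurements from any particular sensor.

```python
import math


def snr(photons, quantum_efficiency, read_noise_e, dark_e=0.0):
    """Approximate per-pixel signal-to-noise ratio for one exposure.

    photons            -- photons reaching the pixel during the exposure
    quantum_efficiency -- fraction of photons converted to electrons (0 to 1)
    read_noise_e       -- read noise in electrons (RMS)
    dark_e             -- dark-current electrons accumulated in the exposure
    """
    signal = photons * quantum_efficiency
    # Shot noise and dark noise are Poisson, so their variances equal their means;
    # read noise is added in quadrature.
    noise = math.sqrt(signal + dark_e + read_noise_e ** 2)
    return signal / noise


# Hypothetical values for a dim exposure of 100 photons per pixel.
front_illuminated = snr(photons=100, quantum_efficiency=0.6, read_noise_e=3.0)
back_illuminated = snr(photons=100, quantum_efficiency=0.9, read_noise_e=1.5)

print(f"front-illuminated (assumed values): SNR = {front_illuminated:.2f}")
print(f"back-illuminated (assumed values):  SNR = {back_illuminated:.2f}")
```

With these assumed values, the sensor with higher quantum efficiency and lower read noise comes out ahead, and the gap is most noticeable at low photon counts, where read noise is a larger share of the total.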

Although both CCD and CMOS sensor types are suitable for most imaging applications, the recent trend is toward CMOS. The latest advancements in CMOS technology also make it difficult to recommend a camera based solely on the sensor architecture (CCD vs. CMOS), so we suggest you also consider other camera features that may be relevant to your individual application.

The next part of this series will review noise control in cameras through cooling, and how much cooling you need in your microscopy camera.